A minimal VMM in Rust with Apple Hypervisor

Virtual Machine Monitor

Previously, we implemented a VMM with KVM for an x86_64 processor. This time we'll do the same thing — implement a VMM from scratch — but on Apple Silicon macOS using the Hypervisor framework.

On the surface, KVM and Apple Hypervisor APIs look very similar:

- Create a VM

- Set up memory for it (similar to libc's mmap and munmap)

- Create a vCPU and set up its registers

- In a loop, run the vCPU and handle any exit events

- Clean up by closing file descriptors (KVM) or calling destroy methods (Apple Hypervisor)

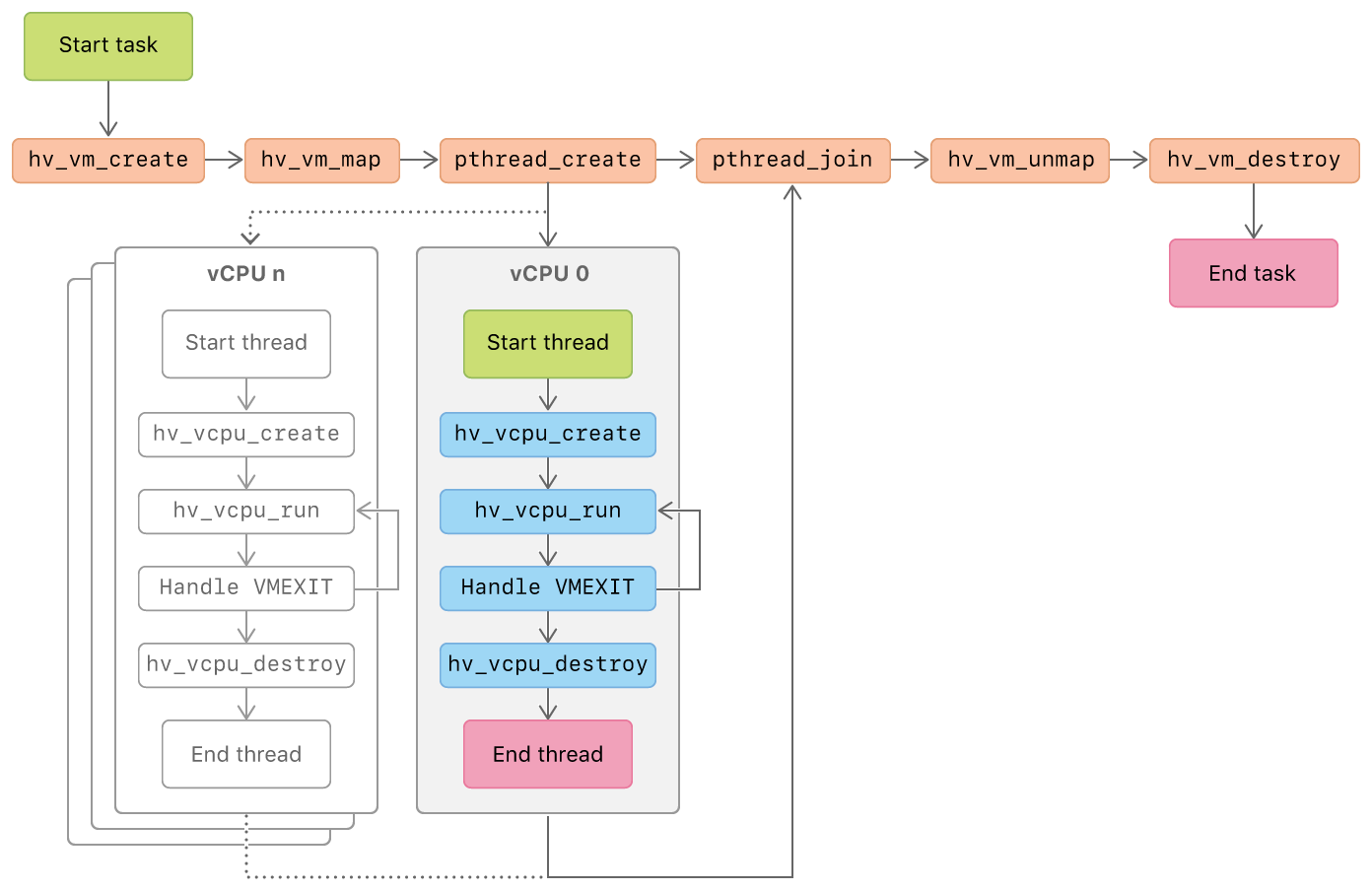

Just see this image from the official Hypervisor docs:

Like before, we'll use minimal external dependencies, but this time we'll go a bit deeper — generate Rust bindings for Hypervisor APIs, use ARM assembly for guest code instead of raw binary, and read the output generated by the guest via a general-purpose register.

Rust Bindings for Hypervisor API

bindgen automatically generates Rust FFI bindings for C/C++ libraries.

It takes a wrapper header file as input. This wrapper file includes the header files of interest — in our case, the Hypervisor framework header:

wrapper.h:

#include "Hypervisor/Hypervisor.h"

How do we locate the header file for Hypervisor on macOS? By using the xcrun CLI:

$ xcrun -sdk macosx --show-sdk-path

/Library/Developer/CommandLineTools/SDKs/MacOSX.sdk

# It links to installed SDK version:

$ readlink /Library/Developer/CommandLineTools/SDKs/MacOSX.sdk

MacOSX26.4.sdk

Frameworks reside in /Library/Developer/CommandLineTools/SDKs/MacOSX.sdk/System/Library/Frameworks. For Hypervisor headers:

$ ls /Library/Developer/CommandLineTools/SDKs/MacOSX.sdk/System/Library/Frameworks/Hypervisor.framework/Headers/

hv_arch_vmx.h hv_gic_parameters.h hv_sme_config.h hv_vm_allocate.h hv.h

hv_arch_x86.h hv_gic_state.h hv_types.h hv_vm_config.h Hypervisor.h

hv_base.h hv_gic_types.h hv_vcpu_config.h hv_vm_types.h

hv_error.h hv_gic.h hv_vcpu_types.h hv_vm.h

hv_gic_config.h hv_intr.h hv_vcpu.h hv_vmx.h

Hypervisor.h already includes all the other header files for both x86_64 and arm64, so in our wrapper.h we just need to include this single file.

build.rs

First, we add a build-time dependency in Cargo.toml via cargo add --build bindgen.

Now we have everything to write the build script that generates Rust bindings from wrapper.h into bindings.rs:

use std::{path::PathBuf, process::Command};

fn get_sdk_path() -> String {

let output = Command::new("xcrun")

.arg("-sdk")

.arg("macosx")

.arg("--show-sdk-path")

.output()

.expect("failed to run xcrun -sdk macosx --show-sdk-path");

if !output.status.success() {

panic!(

"failed to get sdk path: {}",

String::from_utf8_lossy(output.stderr.as_ref())

);

}

let sdk_path: String = String::from_utf8_lossy(output.stdout.as_ref()).into();

sdk_path.trim().into()

}

fn main() {

let binding_rs_path =

PathBuf::from(std::env::var("OUT_DIR").expect("OUT_DIR env var not present"))

.join("bindings.rs");

let sdk_path = get_sdk_path();

bindgen::builder()

.header("wrapper.h")

.clang_arg(format!("-F{}/System/Library/Frameworks", sdk_path))

.derive_debug(true)

.derive_default(true)

.generate()

.expect("failed to generate bindings")

.write_to_file(binding_rs_path)

.expect("failed to write bindings.rs");

println!("cargo:rustc-link-lib=framework=Hypervisor");

}

bindgen uses clang to process header files, so we need to provide the framework search path via the -F flag. Without it, we'd see errors like "Hypervisor/Hypervisor.h" file not found, which is why we resolve the SDK path first.

From man clang on macOS:

-F<directory>

Add the specified directory to the search path for framework include files.

We also need to tell rustc which system library to link against via rustc-link-lib. Without this, cargo build will fail with "undefined symbols" when we use functions from the generated bindings.

We can now use the generated bindings.rs as follows:

main.rs:

mod bindings {

include!(concat!(env!("OUT_DIR"), "/bindings.rs"));

}

fn main() {}

The env! macro retrieves an environment variable at compile time, as opposed to std::env::var, which reads it at runtime.

The included file is textually inserted into the surrounding code, so we access its symbols like bindings::hv_vm_create.

Create a VM

#![allow(non_upper_case_globals)]

#![allow(non_camel_case_types)]

#![allow(non_snake_case)]

#![allow(unnecessary_transmutes)]

#![allow(improper_ctypes)]

#![allow(unused)]

use std::error::Error;

use crate::bindings::{

HV_BAD_ARGUMENT, HV_BUSY, HV_ERROR, HV_NO_DEVICE, HV_NO_RESOURCES, HV_SUCCESS, HV_UNSUPPORTED,

hv_vm_create,

};

mod bindings {

include!(concat!(env!("OUT_DIR"), "/bindings.rs"));

}

fn main() -> Result<(), Box<dyn Error>> {

/// 1. Create a VM

let val = unsafe { hv_vm_create(std::ptr::null_mut()) };

match val {

HV_SUCCESS => println!("HV_SUCCESS: The operation completed successfully."),

HV_ERROR => eprintln!("HV_ERROR: The operation was unsuccessful."),

HV_BUSY => eprintln!(

"HV_BUSY: The operation was unsuccessful because the owning resource was busy."

),

HV_BAD_ARGUMENT => eprintln!(

"HV_BAD_ARGUMENT: The operation was unsuccessful because the function call had an invalid argument."

),

HV_NO_RESOURCES => eprintln!(

"HV_NO_RESOURCES: The operation was unsuccessful because the host had no resources available to complete the request."

),

HV_NO_DEVICE => eprintln!(

"HV_NO_DEVICE: The operation was unsuccessful because no VM or vCPU was available."

),

HV_UNSUPPORTED => {

eprintln!("HV_UNSUPPORTED: The operation requested isn’t supported by the hypervisor.")

}

_ => eprintln!("unknown {val}"),

}

Ok(())

}

The generated symbol names come from C header files and don't follow Rust naming conventions, so we add #![allow(...)] attributes at the top to suppress warnings.

The match statement for the return value is straight from the documentation for hv_return_t.

Running this with cargo run, we see:

unknown -85377017

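The value -85377017 is 0xfae94007 when read as an unsigned 32-bit integer, which hv_error.h defines as HV_DENIED, a code our match doesn't cover. The call is denied because our VMM must have the com.apple.security.hypervisor entitlement. Let's create an entitlement file: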

virt.entitlements:

<?xml version="1.0" encoding="UTF-8"?>

<!DOCTYPE plist PUBLIC "-//Apple//DTD PLIST 1.0//EN" "http://www.apple.com/DTDs/PropertyList-1.0.dtd">

<plist version="1.0">

<dict>

<key>com.apple.security.hypervisor</key>

<true/>

</dict>

</plist>

For local development, self-sign the debug binary:

codesign --sign - --force --entitlements=virt.entitlements ../target/debug/minimal-apple-hypervisor

Now, if we rerun our VMM:

$ ../target/debug/minimal-apple-hypervisor

HV_SUCCESS: The operation completed successfully.
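The earlier failure value, -85377017, is easier to look up in hv_error.h once it is reinterpreted as an unsigned 32-bit code. The sketch below does that conversion in plain Rust. The helper names hex_code and code_name are hypothetical, and the small name table is an assumption read from hv_error.h that should be checked against the installed SDK.

```rust
/// Format a signed hv_return_t value as the unsigned hex code used in C headers.
fn hex_code(ret: i32) -> String {
    // `as u32` keeps the bit pattern and only changes the interpretation.
    format!("{:#010x}", ret as u32)
}

/// Hypothetical lookup table. The values are assumptions taken from
/// hv_error.h; verify them against your SDK before relying on them.
fn code_name(ret: i32) -> Option<&'static str> {
    match ret as u32 {
        0x0000_0000 => Some("HV_SUCCESS"),
        0xfae9_4001 => Some("HV_ERROR"),
        0xfae9_4007 => Some("HV_DENIED"),
        _ => None,
    }
}

fn main() {
    let ret: i32 = -85377017;
    println!("{} {:?}", hex_code(ret), code_name(ret));
}
```

Printing return codes in hex in the fallback arm of the match would make unknown values like this one quicker to identify.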

All Hypervisor APIs return hv_return_t, so to keep things DRY, let's create an hv_call! macro and an HVError struct.

#[derive(Debug)]

pub struct HVError(i32);

impl std::error::Error for HVError {}

impl std::fmt::Display for HVError {

fn fmt(&self, f: &mut std::fmt::Formatter<'_>) -> std::fmt::Result {

write!(f, "{}", self.0)

}

}

impl HVError {

pub fn from_error(err: i32) -> Self {

HVError(err)

}

}

macro_rules! hv_call {

($e:expr) => {{

let val = unsafe { $e };

match val {

HV_SUCCESS => println!("HV_SUCCESS: The operation completed successfully."),

HV_ERROR => eprintln!("HV_ERROR: The operation was unsuccessful."),

HV_BUSY => eprintln!(

"HV_BUSY: The operation was unsuccessful because the owning resource was busy."

),

HV_BAD_ARGUMENT => eprintln!(

"HV_BAD_ARGUMENT: The operation was unsuccessful because the function call had an invalid argument."

),

HV_NO_RESOURCES => eprintln!(

"HV_NO_RESOURCES: The operation was unsuccessful because the host had no resources available to complete the request."

),

HV_NO_DEVICE => eprintln!(

"HV_NO_DEVICE: The operation was unsuccessful because no VM or vCPU was available."

),

HV_UNSUPPORTED => {

eprintln!("HV_UNSUPPORTED: The operation requested isn’t supported by the hypervisor.")

}

_ => eprintln!("unknown {val}"),

}

match val {

HV_SUCCESS => Ok(val),

_ => Err(HVError::from_error(val))

}

}};

}

With this, our VM creation is simply:

/// 1. Create a VM

let vm = hv_call!(hv_vm_create(std::ptr::null_mut()))?;

Create VM Memory

For this, we need libc for mmap and munmap system calls, so we add our only runtime dependency: cargo add libc.

We ask the system for read-write memory, but when mapping it to the hypervisor with hv_vm_map, we make it read-write-execute.

/// 2 blocks/pages of 16KiB - on Apple Silicon default page size is 16KiB

const VM_MEMORY_SIZE: usize = 2 * 16384;

struct Mmap {

pub addr: *mut std::os::raw::c_void,

pub len: usize,

}

impl Mmap {

pub fn new(len: usize) -> Result<Self, Box<dyn Error>> {

let addr = unsafe {

libc::mmap(

std::ptr::null_mut(),

len,

libc::PROT_READ | libc::PROT_WRITE,

libc::MAP_ANON | libc::MAP_PRIVATE,

-1,

0,

)

};

if addr == libc::MAP_FAILED {

return Err(format!("failed to mmap: {}", std::io::Error::last_os_error()).into());

}

Ok(Mmap { addr, len })

}

}

impl Drop for Mmap {

fn drop(&mut self) {

if !self.addr.is_null() {

unsafe {

libc::munmap(self.addr, self.len);

}

}

}

}

// in main:

/// 2. Create and set up VM memory.

/// Map a region in VMM's virtual address space to guest physical address space

let vm_mmap = Mmap::new(VM_MEMORY_SIZE)?;

let vm_map = hv_call!(hv_vm_map(

vm_mmap.addr,

0,

VM_MEMORY_SIZE,

(HV_MEMORY_READ | HV_MEMORY_WRITE | HV_MEMORY_EXEC).into(),

))?;

Copy Guest Code

We want to run the whole VM lifecycle, so we implement simple guest code that does two things:

- Store a value in a CPU register (x0)

- Execute an instruction (hvc) that causes the VM to exit and returns control to our VMM

It's simple enough that we can write it in assembly and let Rust take care of assembling it into machine code.

use std::arch::global_asm;

// Guest code

global_asm!(

r#"

.global _guest_code_start

.global _guest_code_end

.align 4

_guest_code_start:

mov x0, #0x41 // Move a value in general purpose register, so we can see it after vcpu exit.

hvc #0 // Hypervisor call - will cause exit

_guest_code_end:

"#

);

unsafe extern "C" {

static guest_code_start: u8;

static guest_code_end: u8;

}

fn guest_code() -> &'static [u8] {

unsafe {

let start = &guest_code_start as *const u8;

let end = &guest_code_end as *const u8;

let len = end.offset_from(start) as usize;

std::slice::from_raw_parts(start, len)

}

}

We place code between two labels exported as global symbols, expose those labels to Rust via extern declarations, and then read the bytes between them as raw data to copy into guest memory:

/// 3. Copy Guest Code

unsafe {

let code = guest_code();

std::ptr::copy_nonoverlapping(code.as_ptr(), vm_mmap.addr as *mut u8, code.len());

}

Let's dissect the guest code machinery. We use global_asm! because this assembly isn't scoped to a function; it emits instructions directly into the VMM binary. Inside the macro, we mark the start and end boundaries of our guest code with labels and export them as .global symbols. They need to be .global so the linker knows where they're defined. We then reference them via extern "C" and let the linker resolve them for us.

From the assembly perspective, labels represent addresses — they mark specific positions in the compiled binary. By placing them at the start and end of our guest code, we can determine exactly where it lives in memory.

From Rust's perspective, guest_code_start and guest_code_end are two static variables of type u8 that exist somewhere in memory. The byte at guest_code_start is the first byte of our first instruction, while guest_code_end sits just past the last instruction.

For our purposes, the declared type is irrelevant — we only need their addresses (&guest_code_start as *const u8 and &guest_code_end as *const u8) to perform pointer arithmetic and obtain a &'static [u8] slice of the guest code.

The leading underscore on _guest_code_start and _guest_code_end in assembly is a macOS convention — the Mach-O object format prefixes C symbols with an underscore.
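For comparison with the raw-binary approach, the two guest instructions can also be encoded by hand. The sketch below builds the same eight bytes the assembler should produce for mov x0, #0x41 and hvc #0. The helper names are hypothetical, and it assumes the assembler picks the MOVZ form for the mov alias; the base opcodes come from the A64 encodings for MOVZ (64-bit) and HVC.

```rust
/// Encode `movz x<rd>, #imm16` (64-bit variant, no shift).
fn movz_x(rd: u32, imm16: u16) -> u32 {
    assert!(rd < 32, "register index out of range");
    0xD280_0000 | ((imm16 as u32) << 5) | rd
}

/// Encode `hvc #imm16`.
fn hvc(imm16: u16) -> u32 {
    0xD400_0002 | ((imm16 as u32) << 5)
}

/// The guest program as little-endian bytes, ready to copy into guest memory.
fn guest_code_bytes() -> Vec<u8> {
    [movz_x(0, 0x41), hvc(0)]
        .iter()
        .flat_map(|word| word.to_le_bytes())
        .collect()
}

fn main() {
    for byte in guest_code_bytes() {
        print!("{byte:02x} ");
    }
    println!();
}
```

Comparing these bytes with the slice returned by guest_code() is a quick sanity check that the labels bracket exactly the two instructions.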

Create a vCPU

/// 4. Create a virtual CPU

let mut vcpu_exit = std::ptr::null_mut();

let mut id = 0;

let vcpu = hv_call!(hv_vcpu_create(

&mut id,

&mut vcpu_exit,

std::ptr::null_mut()

))?;

On successful creation of a vCPU, hv_vcpu_create stores the CPU id in id and a pointer to exit information in vcpu_exit.

Later, when we run the vCPU and handle exit events, the exit details will be available through vcpu_exit.

Set Up CPU Registers

Here we set the program counter (PC) to 0 — the guest physical address where we copied our code — and configure the processor state register (CPSR) to disable interrupts.
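The CPSR value used in this step, 0x3c5, can be assembled from named bit fields, which makes it clearer what each bit contributes. This is a minimal sketch; the constant names are hypothetical and are not part of the generated Hypervisor bindings.

```rust
// Mode field, bits [3:0]: 0b0101 selects EL1h (EL1 using SP_EL1).
const MODE_EL1H: u64 = 0b0101;
// Exception mask bits.
const MASK_FIQ: u64 = 1 << 6; // F
const MASK_IRQ: u64 = 1 << 7; // I
const MASK_SERROR: u64 = 1 << 8; // A
const MASK_DEBUG: u64 = 1 << 9; // D

/// Initial processor state: EL1h with all four exception classes masked.
fn initial_cpsr() -> u64 {
    MODE_EL1H | MASK_FIQ | MASK_IRQ | MASK_SERROR | MASK_DEBUG
}

fn main() {
    println!("{:#x}", initial_cpsr()); // prints 0x3c5
}
```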

/// 5. Setup CPU registers

let set_reg = hv_call!(hv_vcpu_set_reg(id, hv_reg_t_HV_REG_PC, 0))?;

// Set Program State Register to basically disable all interrupts.

// Put the CPU in EL1h (EL1 using SP_EL1) with interrupts masked. Value 0x3c5:

// bits [3:0] = 0101 → EL1h mode

// bit 6 (F) = 1 → mask FIQ

// bit 7 (I) = 1 → mask IRQ

// bit 8 (A) = 1 → mask SError

// bit 9 (D) = 1 → mask debug

hv_call!(hv_vcpu_set_reg(id, hv_reg_t_HV_REG_CPSR, 0x3c5))?;

Run the vCPU

Now all that's left is to run the vCPU and handle exit events by inspecting vcpu_exit.

Since we expect hvc to cause the exit, we verify this by checking the exception class in the syndrome register. If it matches, we read the x0 register to confirm our guest code stored the expected value.
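The exception-class check can be factored into small pure functions and tried without a VM. The sketch below decodes the class from bits [31:26] of a syndrome value and, for an HVC exit, the 16-bit immediate from bits [15:0]. The function names are hypothetical, and the sample syndrome values are constructed by hand from the Arm ESR layout, not captured from a real run.

```rust
/// Exception class for an HVC executed in AArch64 state.
const EC_HVC64: u64 = 0x16;

/// Extract the exception class, bits [31:26] of the syndrome.
fn exception_class(syndrome: u64) -> u64 {
    (syndrome >> 26) & 0x3f
}

/// For an HVC exit, return the immediate encoded in the instruction
/// (bits [15:0] of the syndrome); otherwise return None.
fn hvc_immediate(syndrome: u64) -> Option<u16> {
    if exception_class(syndrome) == EC_HVC64 {
        Some((syndrome & 0xffff) as u16)
    } else {
        None
    }
}

fn main() {
    // Hand-built example: EC = 0x16, IL bit (25) set, immediate 0.
    let syndrome: u64 = (EC_HVC64 << 26) | (1 << 25);
    println!("syndrome: {syndrome:#x}");
    println!("class: {:#x}", exception_class(syndrome));
    println!("hvc immediate: {:?}", hvc_immediate(syndrome));
}
```

Returning the immediate as an Option leaves room for a guest to use different hvc numbers as a tiny call interface later on.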

/// 6. Run the vCPU

loop {

hv_call!(hv_vcpu_run(id))?;

let exit = unsafe { &*vcpu_exit };

/// 7. Handle vCPU exit event

println!("exit reason: {}", exit.reason);

println!(" physical_address: {:#x}", exit.exception.physical_address);

println!(" virtual_address: {:#x}", exit.exception.virtual_address);

println!(" syndrome: {:#x}", exit.exception.syndrome);

// check that HVC got us here

// https://developer.arm.com/documentation/ddi0602/2022-09/Base-Instructions/HVC--Hypervisor-Call-

// https://developer.arm.com/documentation/111107/2026-03/AArch64-Registers/ESR-EL1--Exception-Syndrome-Register--EL1-

// exception class bits [31:26]

let exception_class = (exit.exception.syndrome >> 26) & 0x3f;

if exception_class == 0x16 {

println!("HVC executed in Guest");

// get the value of x0

let mut x0 = 0u64;

hv_call!(hv_vcpu_get_reg(id, hv_reg_t_HV_REG_X0, &mut x0))?;

println!("X0 from Guest: {x0:#x}");

}

break;

}

Cleanup

Let's clean up in reverse order of creation — destroy the vCPU, unmap the memory region, and finally destroy the VM:

/// 8. Cleanup: destroy vcpu, unmap memory region, destroy vm

let vcpu_destroy = hv_call!(hv_vcpu_destroy(id))?;

let vm_unmap = hv_call!(hv_vm_unmap(0, VM_MEMORY_SIZE))?;

let vm_destroy = hv_call!(hv_vm_destroy())?;

That's all it takes to run the VM lifecycle from the official docs!

Closing Thoughts

The steps to run a VM lifecycle with KVM and Apple Hypervisor are conceptually similar. While our VM doesn't do anything useful, it's a good exercise to explore various aspects of systems programming.

Try modifying the value of x0 in the guest code and explore the full source code!

Let me know your feedback in the comment section below!